Copyright © 2003 IBM

Special Notices

The following terms are registered trademarks of International Business Machines Corporation in the United States and/or other countries: AIX, OS/2, System/390. A full list of U.S. trademarks owned by IBM may be found at http://www.ibm.com/legal/copytrade.shtml.

Intel is a trademark or registered trademark of Intel Corporation in the United States, other countries, or both.

Windows is a trademark of Microsoft Corporation in the United States, other countries, or both.

Linux is a trademark of Linus Torvalds.

UNIX is a registered trademark of The Open Group in the United States and other countries.

Other company, product, and service names may be trademarks or service marks of others.

This document is provided "AS IS," with no express or implied warranties. Use the information in this document at your own risk.

License Information

This document may be reproduced or distributed in any form without prior permission provided the copyright notice is retained on all copies. Modified versions of this document may be freely distributed provided that they are clearly identified as such, and this copyright is included intact.

November 25, 2003

Table of Contents

- Preface

- 1. What is EVMS?

- 2. Using the EVMS interfaces

- 2.1. EVMS GUI

- 2.1.1. Using context sensitive and action menus

- 2.1.2. Saving changes

- 2.1.3. Refreshing changes

- 2.1.4. Using the GUI "+"

- 2.1.5. Using the accelerator keys

- 2.2. EVMS Ncurses interface

- 2.2.1. Navigating through EVMS Ncurses

- 2.2.2. Saving changes

- 2.3. EVMS Command Line Interpreter

- 2.3.1. Using the EVMS CLI

- 2.3.2. Notes on commands and command files

- 3. The EVMS log file and error data collection

- 4. Viewing compatibility volumes after migrating

- 4.1. Using the EVMS GUI

- 4.2. Using Ncurses

- 4.3. Using the CLI

- 5. Obtaining interface display details

- 5.1. Using the EVMS GUI

- 5.2. Using Ncurses

- 5.3. Using the CLI

- 6. Adding and removing a segment manager

- 6.1. When to add a segment manager

- 6.2. Types of segment managers

- 6.2.1. DOS Segment Manager

- 6.2.2. GUID Partitioning Table (GPT) Segment Manager

- 6.2.3. S/390 Segment Manager

- 6.2.4. Cluster segment manager

- 6.2.5. BSD segment manager

- 6.2.6. MAC segment manager

- 6.2.7. BBR segment manager

- 6.3. Adding a segment manager to an existing disk

- 6.4. Adding a segment manager to a new disk

- 6.5. Example: add a segment manager

- 6.5.1. Using the EVMS GUI

- 6.5.2. Using Ncurses

- 6.5.3. Using the CLI

- 6.6. Removing a segment manager

- 6.7. Example: remove a segment manager

- 6.7.1. Using the EVMS GUI context sensitive menu

- 6.7.2. Using Ncurses

- 6.7.3. Using the CLI

- 7. Creating segments

- 7.1. When to create a segment

- 7.2. Example: create a segment

- 7.2.1. Using the EVMS GUI

- 7.2.2. Using Ncurses

- 7.2.3. Using the CLI

- 8. Creating a container

- 8.1. When to create a container

- 8.2. Example: create a container

- 8.2.1. Using the EVMS GUI

- 8.2.2. Using Ncurses

- 8.2.3. Using the CLI

- 9. Creating regions

- 9.1. When to create regions

- 9.2. Example: create a region

- 9.2.1. Using the EVMS GUI

- 9.2.2. Using Ncurses

- 9.2.3. Using the CLI

- 10. Creating drive links

- 10.1. What is drive linking?

- 10.2. How drive linking is implemented

- 10.3. Creating a drive link

- 10.4. Example: create a drive link

- 10.4.1. Using the EVMS GUI

- 10.4.2. Using Ncurses

- 10.4.3. Using the CLI

- 10.5. Expanding a drive link

- 10.6. Shrinking a drive link

- 10.7. Deleting a drive link

- 11. Creating snapshots

- 11.1. What is a snapshot?

- 11.2. Creating and activating snapshot objects

- 11.2.1. Creating a snapshot

- 11.2.2. Activating a snapshot

- 11.3. Example: create a snapshot

- 11.3.1. Using the EVMS GUI

- 11.3.2. Using Ncurses

- 11.3.3. Using the CLI

- 11.4. Reinitializing a snapshot

- 11.4.1. Using the EVMS GUI or Ncurses

- 11.4.2. Using the CLI

- 11.5. Expanding a snapshot

- 11.5.1. Using the EVMS GUI or Ncurses

- 11.5.2. Using the CLI

- 11.6. Deleting a snapshot

- 11.7. Rolling back a snapshot

- 11.7.1. Using the EVMS GUI or Ncurses

- 11.7.2. Using the CLI

- 12. Creating volumes

- 12.1. When to create a volume

- 12.2. Example: create an EVMS native volume

- 12.2.1. Using the EVMS GUI

- 12.2.2. Using Ncurses

- 12.2.3. Using the CLI

- 12.3. Example: create a compatibility volume

- 12.3.1. Using the GUI

- 12.3.2. Using Ncurses

- 12.3.3. Using the CLI

- 13. FSIMs and file system operations

- 13.1. The FSIMs supported by EVMS

- 13.2. Example: add a file system to a volume

- 13.2.1. Using the EVMS GUI

- 13.2.2. Using Ncurses

- 13.2.3. Using the CLI

- 13.3. Example: check a file system

- 13.3.1. Using the EVMS GUI

- 13.3.2. Using Ncurses

- 13.3.3. Using the CLI

- 14. Clustering operations

- 14.1. Rules and restrictions for creating cluster containers

- 14.2. Example: create a private cluster container

- 14.2.1. Using the EVMS GUI

- 14.2.2. Using Ncurses

- 14.2.3. Using the CLI

- 14.3. Example: create a shared cluster container

- 14.3.1. Using the EVMS GUI

- 14.3.2. Using Ncurses

- 14.3.3. Using the CLI

- 14.4. Example: convert a private container to a shared container

- 14.4.1. Using the EVMS GUI

- 14.4.2. Using Ncurses

- 14.4.3. Using the CLI

- 14.5. Example: convert a shared container to a private container

- 14.5.1. Using the EVMS GUI

- 14.5.2. Using Ncurses

- 14.5.3. Using the CLI

- 14.6. Example: deport a private or shared container

- 14.6.1. Using the EVMS GUI

- 14.6.2. Using Ncurses

- 14.6.3. Using the CLI

- 14.7. Deleting a cluster container

- 14.8. Failover and Failback of a private container on Linux-HA

- 14.9. Remote configuration management

- 14.9.1. Using the EVMS GUI

- 14.9.2. Using Ncurses

- 14.9.3. Using the CLI

- 14.10. Forcing a cluster container to be imported

- 15. Converting volumes

- 15.1. When to convert volumes

- 15.2. Example: convert compatibility volumes to EVMS volumes

- 15.2.1. Using the EVMS GUI

- 15.2.2. Using Ncurses

- 15.2.3. Using the CLI

- 15.3. Example: convert EVMS volumes to compatibility volumes

- 15.3.1. Using the EVMS GUI

- 15.3.2. Using Ncurses

- 15.3.3. Using the CLI

- 16. Expanding and shrinking volumes

- 16.1. Why expand and shrink volumes?

- 16.2. Example: shrink a volume

- 16.2.1. Using the EVMS GUI

- 16.2.2. Using Ncurses

- 16.2.3. Using the CLI

- 16.3. Example: expand a volume

- 16.3.1. Using the EVMS GUI

- 16.3.2. Using Ncurses

- 16.3.3. Using the CLI

- 17. Adding features to an existing volume

- 17.1. Why add features to a volume?

- 17.2. Example: add drive linking to an existing volume

- 17.2.1. Using the EVMS GUI

- 17.2.2. Using Ncurses

- 17.2.3. Using the CLI

- 18. Plug-in operations tasks

- 18.1. What are plug-in tasks?

- 18.2. Example: complete a plug-in operations task

- 18.2.1. Using the EVMS GUI

- 18.2.2. Using Ncurses

- 18.2.3. Using the CLI

- 19. Deleting objects

- 19.1. How to delete objects: delete and delete recursive

- 19.2. Example: perform a delete recursive operation

- 19.2.1. Using the EVMS GUI

- 19.2.2. Using Ncurses

- 19.2.3. Using the CLI

- 20. Replacing objects

- 20.1. What is object-replace?

- 20.2. Replacing a drive-link child object

- 20.2.1. Using the EVMS GUI or Ncurses

- 20.2.2. Using the CLI

- 21. Moving segment storage objects

- Appendix A. The DOS plug-in

- Appendix B. The MD region manager

- Appendix C. The LVM plug-in

- C.1. How LVM is implemented

- C.2. Container operations

- C.3. Region operations

- C.3.1. Creating LVM regions

- C.3.2. Expanding LVM regions

- C.3.3. Shrinking LVM regions

- C.3.4. Deleting LVM regions

- C.3.5. Moving LVM regions

- Appendix D. The CSM plug-in

- Appendix E. JFS file system interface module

- Appendix F. XFS file system interface module

- Appendix G. ReiserFS file system interface module

- Appendix H. Ext-2/3 file system interface module


This guide tells how to configure and manage the Enterprise Volume Management System (EVMS). EVMS is a storage management program that provides a single framework for managing and administering your system's storage.

This guide is intended for Linux system administrators and users who are responsible for setting up and maintaining EVMS.

For additional information about EVMS or to ask questions specific to your distribution, refer to the EVMS mailing lists. You can view the list archives or subscribe to the lists from the EVMS Project web site.

The following table shows how this guide is organized:

Table 1. Organization of the EVMS User Guide

| Chapter or appendix title | Contents |
|---|---|
| 1. What is EVMS? | Discusses general EVMS concepts and terms. |
| 2. Using the EVMS interfaces | Describes the three EVMS user interfaces and how to use them. |
| 3. The EVMS log file and error data collection | Discusses the EVMS information and error log file and explains how to change the logging level. |
| 4. Viewing compatibility volumes after migrating | Tells how to view existing volumes that have been migrated to EVMS. |
| 5. Obtaining interface display details | Tells how to view detailed information about EVMS objects. |
| 6. Adding and removing a segment manager | Discusses segments and explains how to add and remove a segment manager. |
| 7. Creating segments | Explains when and how to create segments. |
| 8. Creating a container | Discusses containers and explains when and how to create them. |
| 9. Creating regions | Discusses regions and explains when and how to create them. |
| 10. Creating drive links | Discusses the drive linking feature and tells how to create a drive link. |
| 11. Creating snapshots | Discusses snapshotting and tells how to create a snapshot. |
| 12. Creating volumes | Explains when and how to create volumes. |
| 13. FSIMs and file system operations | Discusses the standard FSIMs shipped with EVMS and provides examples of adding file systems and coordinating file system checks with the FSIMs. |
| 14. Clustering operations | Describes EVMS clustering and how to create private and shared containers. |
| 15. Converting volumes | Explains how to convert EVMS native volumes to compatibility volumes and compatibility volumes to EVMS native volumes. |
| 16. Expanding and shrinking volumes | Tells how to expand and shrink EVMS volumes with the various EVMS user interfaces. |
| 17. Adding features to an existing volume | Tells how to add features, such as drive linking and bad block relocation, to an existing volume. |
| 18. Plug-in operations tasks | Discusses the plug-in tasks that are available within the context of a particular plug-in. |
| 19. Deleting objects | Tells how to safely delete EVMS objects. |
| 20. Replacing objects | Tells how to change the configuration of a volume or storage object. |
| 21. Moving segment storage objects | Discusses how to use the move function for moving segments. |
| A. The DOS plug-in | Provides details about the DOS plug-in, which is a segment manager plug-in. |
| B. The MD region manager | Explains the Multiple Disks (MD) support in Linux, which is a software implementation of RAID. |
| C. The LVM plug-in | Tells how the LVM plug-in is implemented and how to perform container operations. |
| D. The CSM plug-in | Explains how the Cluster Segment Manager (CSM) plug-in is implemented and how to perform CSM operations. |
| E. JFS file system interface module | Provides information about the JFS FSIM. |
| F. XFS file system interface module | Provides information about the XFS FSIM. |
| G. ReiserFS file system interface module | Provides information about the ReiserFS FSIM. |
| H. Ext-2/3 file system interface module | Provides information about the Ext-2/3 FSIM. |

EVMS brings a new model of volume management to Linux®. EVMS integrates all aspects of volume management, such as disk partitioning, Linux logical volume manager (LVM) and multi-disk (MD) management, OS/2 and AIX volume managers, and file system operations, into a single cohesive package. With EVMS, various volume management technologies are accessible through one interface, and new technologies can be added as plug-ins as they are developed.

EVMS lets you manage storage space in a way that is more intuitive and flexible than many other Linux volume management systems. Practical tasks, such as migrating disks or adding new disks to your Linux system, become more manageable with EVMS because EVMS can recognize and read from different volume types and file systems. EVMS provides additional safety controls by not allowing commands that are unsafe. These controls help maintain the integrity of the data stored on the system.

You can use EVMS to create and manage data storage. EVMS lets you use multiple volume management technologies under one framework while ensuring that your system still interacts correctly with stored data. With EVMS, you can use bad block relocation, shrink and expand volumes, create snapshots of your volumes, and set up RAID (redundant array of independent disks) features for your system. You can also use many types of file systems and manipulate these storage pieces in ways that best meet the needs of your particular work environment.

EVMS also provides the capability to manage data on storage that is physically shared by nodes in a cluster. This shared storage allows data to be highly available from different nodes in the cluster.

There are currently three user interfaces available for EVMS: graphical (GUI), text mode (Ncurses), and the Command Line Interpreter (CLI). Additionally, you can use the EVMS Application Programming Interface to implement your own customized user interface.

Table 1.1 tells more about each of the EVMS user interfaces.

Table 1.1. EVMS user interfaces

| User interface | Typical user | Types of use | Function |
|---|---|---|---|
| GUI | All | All uses except automation | Allows you to choose from available options only, instead of having to sort through all the options, including ones that are not available at that point in the process. |
| Ncurses | Users who do not have the GTK+ libraries or the X Window System on their machines | All uses except automation | Allows you to choose from available options only, instead of having to sort through all the options, including ones that are not available at that point in the process. |
| Command Line | Expert | All uses | Allows easy automation of tasks. |

To avoid confusion with other terms that describe volume management in general, EVMS uses a specific set of terms. These terms are listed, from most fundamental to most comprehensive, as follows:

- Logical disk

Representation of anything EVMS can access as a physical disk. In EVMS, physical disks are logical disks.

- Sector

The lowest level of addressability on a block device. This definition is in keeping with the standard meaning found in other management systems.

- Disk segment

An ordered set of physically contiguous sectors residing on the same storage object. The general analogy for a segment is a traditional disk partition, such as a DOS or OS/2® partition.

- Storage region

An ordered set of logically contiguous sectors that are not necessarily physically contiguous.

- Storage object

Any persistent memory structure in EVMS that can be used to build objects or create a volume. Storage object is a generic term for disks, segments, regions, and feature objects.

- Storage container

A collection of storage objects. A storage container consumes one set of storage objects and produces new storage objects. One common subset of storage containers is volume groups, such as AIX® or LVM.

Storage containers can be either of type private or cluster.

- Cluster storage container

Specialized storage containers that consume only disk objects that are physically accessible from all nodes of a cluster.

- Private storage container

A collection of disks that are physically accessible from all nodes of a cluster, managed as a single pool of storage, and owned and accessed by a single node of the cluster at any given time.

- Shared storage container

A collection of disks that are physically accessible from all nodes of a cluster, managed as a single pool of storage, and owned and accessed by all nodes of the cluster simultaneously.

- Deported storage container

A shared cluster container that is not owned by any node of the cluster.

- Feature object

A storage object that contains an EVMS native feature, such as bad block relocation.

An EVMS Native Feature is a function of volume management designed and implemented by EVMS. These features are not intended to be backward compatible with other volume management technologies.

- Logical volume

A volume that consumes a storage object and exports something mountable. There are two varieties of logical volumes: EVMS volumes and compatibility volumes.

EVMS volumes contain EVMS native metadata and can support all EVMS features. /dev/evms/my_volume would be an example of an EVMS volume.

Compatibility volumes do not contain any EVMS native metadata. Compatibility volumes are backward compatible with their particular scheme, but they cannot support EVMS features. /dev/evms/md/md0 would be an example of a compatibility volume.
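To make the terms above more concrete, the following Python sketch models how a logical volume resolves one of its sectors down through a region, the segments beneath it, and finally a logical disk. It is a hypothetical illustration, not EVMS code: it assumes every object maps sectors one-to-one onto its parent, and the class names, disk sizes, and segment offsets are invented for the example. It says nothing about how the EVMS Engine actually stores its metadata.

```python
from dataclasses import dataclass
from typing import List, Protocol, Tuple


class StorageObject(Protocol):
    """Hypothetical interface: map one of this object's sectors to (disk, sector)."""

    name: str

    def map(self, sector: int) -> Tuple[str, int]: ...


@dataclass
class LogicalDisk:
    name: str
    sectors: int

    def map(self, sector: int) -> Tuple[str, int]:
        # Bottom of the stack: a sector on a logical disk maps to itself.
        if not 0 <= sector < self.sectors:
            raise IndexError(f"sector {sector} is outside {self.name}")
        return self.name, sector


@dataclass
class Segment:
    # Physically contiguous sectors [start, start + sectors) of one parent object.
    name: str
    parent: StorageObject
    start: int
    sectors: int

    def map(self, sector: int) -> Tuple[str, int]:
        if not 0 <= sector < self.sectors:
            raise IndexError(f"sector {sector} is outside {self.name}")
        return self.parent.map(self.start + sector)


@dataclass
class Region:
    # Logically contiguous sectors built from (object, start, length) extents
    # that need not be physically contiguous, or even on the same disk.
    name: str
    extents: List[Tuple[StorageObject, int, int]]

    @property
    def sectors(self) -> int:
        return sum(length for _, _, length in self.extents)

    def map(self, sector: int) -> Tuple[str, int]:
        offset = sector
        if offset >= 0:
            for obj, start, length in self.extents:
                if offset < length:
                    return obj.map(start + offset)
                offset -= length
        raise IndexError(f"sector {sector} is outside {self.name}")


@dataclass
class Volume:
    # A logical volume consumes one storage object and exports it for mounting.
    path: str
    obj: StorageObject

    def map(self, sector: int) -> Tuple[str, int]:
        return self.obj.map(sector)


# Hypothetical layout: one segment on each of two disks, joined by a region.
sda = LogicalDisk("sda", 1000)
sdb = LogicalDisk("sdb", 1000)
sda1 = Segment("sda1", sda, 63, 500)
sdb1 = Segment("sdb1", sdb, 63, 500)
region = Region("region1", [(sda1, 0, 300), (sdb1, 100, 200)])
volume = Volume("/dev/evms/my_volume", region)

print(volume.map(0))    # ('sda', 63)
print(volume.map(300))  # ('sdb', 163)
```

Each object only translates its own sectors into its parent's, which is what allows objects to be stacked on top of one another.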

There are numerous drivers in the Linux kernel, such as Device Mapper and MD (software RAID), that implement volume management schemes. EVMS is built on top of these drivers to provide one framework for combining and accessing the capabilities.

The EVMS Engine handles the creation, configuration, and management of volumes, segments, and disks. The EVMS Engine is a programmatic interface to the EVMS system. User interfaces and programs that use EVMS must go through the Engine.

EVMS provides the capacity for plug-in modules to the Engine that allow EVMS to perform specialized tasks without altering the core code. These plug-in modules allow EVMS to be more extensible and customizable than other volume management systems.

EVMS defines a layered architecture where plug-ins in each layer create abstractions of the layer or layers below. EVMS also allows most plug-ins to create abstractions of objects within the same layer. The following list defines these layers from the bottom up.

- Device managers

The first (bottom) layer consists of device managers. These plug-ins communicate with hardware device drivers to create the first EVMS objects. Currently, all devices are handled by a single plug-in. Future releases of EVMS might need additional device managers for network device management (for example, to manage disks on a storage area network (SAN)).

- Segment managers

The second layer consists of segment managers. These plug-ins handle the segmenting, or partitioning, of disk drives. The Engine components can replace partitioning programs, such as fdisk and Disk Druid, and EVMS uses Device Mapper to replace the in-kernel disk partitioning code. Segment managers can also be "stacked," meaning that one segment manager can take as input the output from another segment manager.

EVMS provides the following segment managers: DOS, GPT, System/390® (S/390), Cluster, and BSD. Other segment manager plug-ins can be added to support other partitioning schemes.

- Region managers

The third layer consists of region managers. This layer provides a place for plug-ins that ensure compatibility with existing volume management schemes in Linux and other operating systems. Region managers are intended to model systems that provide a logical abstraction above disks or partitions.

Like segment managers, region managers can also be stacked. Therefore, the input object(s) to a region manager can be disks, segments, or other regions.

There are currently four region manager plug-ins in EVMS: Linux LVM, AIX, OS/2, and Multi-Disk (MD).

- Linux LVM

The Linux LVM plug-in provides compatibility with the Linux LVM and allows the creation of volume groups (known in EVMS as containers) and logical volumes (known in EVMS as regions).

- AIX LVM

The AIX LVM plug-in provides compatibility with AIX and is similar in functionality to the Linux LVM, because it also uses volume groups and logical volumes.

- OS/2 LVM

The OS/2 plug-in provides compatibility with volumes created under OS/2. Unlike the Linux and AIX LVMs, the OS/2 LVM is based on linear linking of disk partitions, as well as bad-block relocation. The OS/2 LVM does not allow for modifications.

- MD

The Multi-Disk (MD) plug-in for RAID provides RAID levels linear, 0, 1, 4, and 5 in software. MD is one plug-in that displays as four region managers that you can choose from.

- EVMS features

The next layer consists of EVMS features. This layer is where new EVMS-native functionality is implemented. EVMS features can be built on any object in the system, including disks, segments, regions, or other feature objects. All EVMS features share a common type of metadata, which makes discovery of feature objects much more efficient, and recovery of broken feature objects much more reliable. There are three features currently available in EVMS: drive linking, Bad Block Relocation, and snapshotting.

- Drive Linking

Drive linking allows any number of objects to be linearly concatenated together into a single object. A drive linked volume can be expanded by adding another storage object to the end or shrunk by removing the last object.

- Bad Block Relocation

Bad Block Relocation (BBR) monitors its I/O path and detects write failures (which can be caused by a damaged disk). In the event of such a failure, the data from that request is stored in a new location. BBR keeps track of this remapping. Additional I/Os to that location are redirected to the new location.

- Snapshotting

The Snapshotting feature provides a mechanism for creating a "frozen" copy of a volume at a single instant in time, without having to take that volume off-line. This is useful for performing backups on a live system. Snapshots work with any volume (EVMS or compatibility), and can use any other available object as a backing store. After a snapshot is created and made into an EVMS volume, writes to the "original" volume cause the original contents of that location to be copied to the snapshot's storage object. Reads from the snapshot volume therefore return the data as it existed on the original volume when the snapshot was created.

- File System Interface Modules

File System Interface Modules (FSIMs) provide coordination with the file systems during certain volume management operations. For instance, when expanding or shrinking a volume, the file system must also be expanded or shrunk to the appropriate size. Ordering in this example is also important; a file system cannot be expanded before the volume, and a volume cannot be shrunk before the file system. The FSIMs allow EVMS to ensure this coordination and ordering.

FSIMs also perform file system operations from one of the EVMS user interfaces. For instance, a user can make new file systems and check existing file systems by interacting with the FSIM.

- Cluster Manager Interface Modules

Cluster Manager Interface Modules, also known as the EVMS Clustered Engine (ECE), interface with the local cluster manager installed on the system. The ECE provides a standardized ECE API to the Engine while hiding cluster manager details from the Engine.
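The drive linking and snapshotting features described above can be illustrated with a small in-memory Python model. This is a sketch under stated assumptions, not the EVMS implementation: storage is modeled as Python lists of equally sized blocks, the snapshot's backing store is a dictionary, and the class and method names are hypothetical. It shows drive linking as linear concatenation that grows and shrinks only at the end, and snapshotting as copy-on-write, where the first write to an origin block saves the old contents for the snapshot.

```python
from typing import Dict, List


class DriveLink:
    """Drive linking: child objects concatenated end to end into one object.

    Each child is modeled (hypothetically) as a mutable list of blocks.
    """

    def __init__(self, children: List[List[bytes]]):
        self.children = children

    def size(self) -> int:
        return sum(len(child) for child in self.children)

    def _locate(self, block: int):
        # Walk the children in order, subtracting each child's length.
        if block >= 0:
            for child in self.children:
                if block < len(child):
                    return child, block
                block -= len(child)
        raise IndexError("block is outside the drive link")

    def read(self, block: int) -> bytes:
        child, offset = self._locate(block)
        return child[offset]

    def write(self, block: int, data: bytes) -> None:
        child, offset = self._locate(block)
        child[offset] = data

    def expand(self, child: List[bytes]) -> None:
        # Expansion adds another object to the end of the link.
        self.children.append(child)

    def shrink(self) -> List[bytes]:
        # Shrinking removes the last object.
        return self.children.pop()


class Snapshot:
    """Copy-on-write snapshot of an origin that supports read() and write()."""

    def __init__(self, origin: DriveLink):
        self.origin = origin
        self.saved: Dict[int, bytes] = {}  # stands in for the backing store

    def write_origin(self, block: int, data: bytes) -> None:
        # Preserve the original contents the first time a block is overwritten.
        if block not in self.saved:
            self.saved[block] = self.origin.read(block)
        self.origin.write(block, data)

    def read_snapshot(self, block: int) -> bytes:
        # Changed blocks come from the backing store, unchanged ones from the origin.
        if block in self.saved:
            return self.saved[block]
        return self.origin.read(block)


link = DriveLink([[b"a0", b"a1"], [b"b0"]])
link.expand([b"c0"])             # the link now spans four blocks
snap = Snapshot(link)
snap.write_origin(0, b"new")
print(link.read(0), snap.read_snapshot(0))  # b'new' b'a0'
```

In this model only blocks that have been overwritten on the origin occupy space in the backing store, so the backing store can be much smaller than the volume it tracks.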

This chapter explains how to use the EVMS GUI, Ncurses, and CLI interfaces. This chapter also includes information about basic navigation and commands available through the CLI.

The EVMS GUI is a flexible and easy-to-use interface for administering volumes and storage objects. Many users find the EVMS GUI easy to use because it displays which storage objects, actions, and plug-ins are acceptable for a particular task.

The EVMS GUI lets you accomplish most tasks in one of two ways: context sensitive menus or the Actions menu.

Context sensitive menus are available from any of the main "views." Each view corresponds to a page in a notebook widget located on the EVMS GUI main window. These views are made up of different trees or lists that visually represent the organization of different object types, including volumes, feature objects, regions, containers, segments, or disks.

You can view the context sensitive menu for an object by right-clicking on that object. The actions that are available for that object display on the screen. The GUI presents only the actions that are acceptable for the selected object at that point in the process. These actions are not always a complete set.

To use the Actions menu, choose Action-><the action you want to accomplish>-><options>. The Actions menu provides a more guided path for completing a task than do context sensitive menus. The Actions option is similar to the wizard or druid approach used by many GUI applications.

All of the operations you need to perform as an administrator are available through the Actions menu.

All of the changes that you make while in the EVMS GUI are only in memory until you save the changes. In order to make your changes permanent, you must save all changes before exiting. If you forget to save the changes and decide to exit or close the EVMS GUI, you are reminded to save any pending changes.

To explicitly save all the changes you made, select Action->Save, and click the Save button.

The Refresh button updates the view and allows you to see changes, like mount points, that might have changed outside of the GUI.

Along the left-hand side of the panel views in the GUI, a "+" appears beside each item. When you click the "+," the objects that are included in the item are displayed. If any of the objects that display also have a "+" beside them, you can expand them further by clicking on the "+" next to each object name.

You can avoid using a mouse for navigating the EVMS GUI by using a series of key strokes, or "accelerator keys," instead. The following sections tell how to use accelerator keys in the EVMS Main Window, the Selection Window, and the Configuration Options Window.

In the Main Window view, use the following keys to navigate:

Table 2.1. Accelerator keys in the Main Window

| Key | Action |
|---|---|
| Left and right arrow keys | Navigate between the notebook tabs of the different views. |
| Down arrow and Spacebar | Bring keyboard focus into the view. |

While in a view, use the following keys to navigate:

Table 2.2. Accelerator keys in the views

| Key | Action |
|---|---|
| Up and down arrows | Allow movement around the window. |
| "+" | Opens an object tree. |
| "-" | Collapses an object tree. |
| Enter | Brings up the context menu (on a row). |
| Arrows | Navigate a context menu. |
| Enter | Activates an item. |
| Esc | Dismisses the context menu. |
| Tab | Gets you out of the view and moves you back up to the notebook tab. |

To access the action bar menu, press Alt and then the underlined accelerator key for the menu choice (for example, "A" for the Actions dropdown menu).

In a dropdown menu, you can use the up and down arrows to navigate. You can also type the accelerator key for the menu item, which is the underlined character. For example, to initiate a command to delete a container, type Alt + "A" + "D" + "C."

Ctrl-S is a shortcut to initiate saving changes. Ctrl-Q is a shortcut to initiate quitting the EVMS GUI.

A selection window typically contains a selection list, plus four to five buttons below it. Use the following keys to navigate in the selection window:

Table 2.3. Accelerator keys in the selection window

| Key | Action |
|---|---|
| Tab | Navigates (changes keyboard focus) between the list and the buttons. |
| Up and down arrows | Navigate within the selection list. |
| Spacebar | Selects and deselects items in the selection list. |
| Enter on the button, or the button's accelerator character (if one exists) | Activates a button. |

Use the following keys to navigate in the configuration options window:

Table 2.4. Accelerator keys in the configuration options window

| Key | Action |
|---|---|
| Tab | Cycles focus between fields and buttons. |
| Left and right arrows | Navigate the folder tabs if the window has a widget notebook. |
| Spacebar or the down arrow | Switches focus to a different notebook page. |
| Enter, or the button's accelerator character (if one exists) | Activates a button. |

For widgets, use the following keys to navigate:

Table 2.5. Widget navigation keys in the configuration options window

| Key | Action |
|---|---|
| Tab | Cycles forward through a set of widgets. |
| Shift-Tab | Cycles backward through a set of widgets. |

Widget navigation, selection, and activation are the same in all dialog windows.

The EVMS Ncurses (evmsn) user interface is a menu-driven interface with characteristics similar to those of the EVMS GUI. Like the EVMS GUI, evmsn can accommodate new plug-ins and features without requiring any code changes.

The EVMS Ncurses user interface allows you to manage volumes on systems that do not have the X and GTK+ libraries that are required by the EVMS GUI.

The EVMS Ncurses user interface initially displays a list of logical volumes similar to the logical volumes view in the EVMS GUI. Ncurses also provides a menu bar similar to the menu bar in the EVMS GUI.

A general guide to navigating through the layout of the Ncurses window is listed below:

Tab cycles you through the available views.

Status messages and tips are displayed on the last line of the screen.

Typing the accelerator character (the letter highlighted in red) for any menu item activates that item. For example, typing A in any view brings down the Actions menu.

Typing A + Q in a view quits the application.

Typing A + S in a view saves changes made during an evmsn session.

Use the up and down arrows to highlight an object in a view. Pressing Enter while an object in a view is highlighted presents a context popup menu.

Dismiss a context popup menu by pressing Esc or by selecting a menu item with the up and down arrows and pressing Enter to activate the menu item.

Dialog windows are similar in design to the EVMS GUI dialogs, which allow a user to navigate forward and backward through a series of dialogs using Next and Previous. A general guide to dialog windows is listed below:

Tab cycles you through the available buttons. Note that some buttons might not be available until a valid selection is made.

The left and right arrows can also be used to move to an available button.

Navigate a selection list with the up and down arrows.

Toggle the selection of an item in a list with spacebar.

Activate a button that has the current focus with Enter. If the button has an accelerator character (highlighted in red), you can also activate the button by typing the accelerator character regardless of whether the button has the current focus.

The EVMS Ncurses user interface, like the EVMS GUI, provides context menus for actions that are available only to the selected object in a view. Ncurses also provides context menus for items that are available from the Actions menu. These context menus present a list of commands available for a certain object.

All changes you make in EVMS Ncurses remain only in memory until you save them. To make the changes permanent, save all changes before exiting. If you forget to save the changes and decide to exit the EVMS Ncurses interface, you are reminded of the unsaved changes and given the chance to save or discard them before exiting.

To explicitly save all changes, press A + S and confirm that you want to save changes.

The EVMS Command Line Interpreter (EVMS CLI) provides a command-driven user interface for EVMS. The EVMS CLI helps automate volume management tasks and provides an interactive mode in situations where the EVMS GUI is not available.

Because the EVMS CLI is an interpreter, it operates differently than command line utilities for the operating system. The options you specify on the EVMS CLI command line to invoke the EVMS CLI control how the EVMS CLI operates. For example, the command line options tell the CLI where to go for commands to interpret and how often the EVMS CLI must commit changes to disk. When invoked, the EVMS CLI prompts for commands.

The volume management commands the EVMS CLI understands are specified in the /usr/src/evms-2.2.0/engine2/ui/cli/grammar.ps file that accompanies the EVMS package. These commands are described in detail in the EVMS man page, and help on these commands is available from within the EVMS CLI.

Use the evms command to start the EVMS CLI. If you do not enter an option with evms, the EVMS CLI starts in interactive mode. In interactive mode, the EVMS CLI prompts you for commands. The result of each command is immediately saved to disk. The EVMS CLI exits when you type exit. You can modify this behavior by using the following options with evms:

- -b

This option indicates that you are running in batch mode: whenever there is a prompt for input from the user, the default value is accepted automatically. This is the default behavior with the -f option.

- -c

This option commits changes to disk only when the EVMS CLI exits, not after each command.

- -f filename

This option tells the EVMS CLI to use filename as the source of commands. The EVMS CLI exits when it reaches the end of filename.

- -p

This option only parses commands; it does not execute them. When combined with the -f option, the -p option detects syntax errors in command files.

- -h

This option displays help information for options used with the evms command.

- -rl

This option tells the CLI that all remaining items on the command line are replacement parameters for use with EVMS commands.

NOTE

Replacement parameters are accessed in EVMS commands using the $(x) notation, where x is the number identifying which replacement parameter to use. Replacement parameters are assigned numbers (starting with 1) as they are encountered on the command line. Substitutions are not made within comments or quoted strings.

An example would be:

evms -c -f testcase -rl sda sdb

Here, sda is replacement parameter 1, referenced as $(1), and sdb is replacement parameter 2, referenced as $(2).
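The substitution rule in this note can be sketched in Python. This is a simplified model, not the EVMS CLI's actual parser: the helper name `substitute_params` is hypothetical, and the sketch assumes that quoted strings use doubled quotes for embedded quote marks and that comments use the C-style `/* */` form, both described in the notes on commands and command files.

```python
from __future__ import annotations

import re

# A /* ... */ comment, a quoted string (with "" as an escaped quote),
# or a $(n) replacement parameter; only the last one captures a group.
_TOKEN = re.compile(r'/\*.*?\*/|"(?:[^"]|"")*"|\$\((\d+)\)', re.DOTALL)


def substitute_params(text: str, params: list[str]) -> str:
    """Replace $(n) with params[n - 1]; comments and strings are untouched."""

    def replace(match: re.Match) -> str:
        number = match.group(1)
        if number is None:
            return match.group(0)  # a comment or quoted string: copy as-is
        index = int(number) - 1  # parameters are numbered from 1
        if not 0 <= index < len(params):
            raise IndexError(f"no replacement parameter {number}")
        return params[index]

    return _TOKEN.sub(replace, text)


# Parameters as supplied by: evms -c -f testcase -rl sda sdb
print(substitute_params("query: extended info, $(1) /* $(2) */", ["sda", "sdb"]))
# query: extended info, sda /* $(2) */
```

Matching comments and quoted strings as whole tokens is what keeps a `$(n)` inside them from being replaced.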

NOTE

Information on less commonly used options is available in the EVMS man page.

The EVMS CLI allows multiple commands to be entered on a single command line. When you specify multiple commands on a single command line, separate the commands with a colon ( : ). This is important for command files because the EVMS CLI sees a command file as a single long command line. The EVMS CLI has no concept of lines in the file and ignores spaces, so a command in a command file can span several lines and use whatever indentation or margins are convenient. The only requirement is that the command separator (the colon) be present between commands.

The EVMS CLI ignores spaces unless they occur within quotation marks. Enclose in quotation marks any name that contains spaces, non-printable characters, or control characters. If the name contains a quotation mark as part of the name, the quotation mark must be "doubled," as shown in the following example:

"This is a name containing ""embedded"" quote marks."

EVMS CLI keywords are not case sensitive, but EVMS names are case sensitive. Sizes can be input in any units with a unit label, such as KB, MB, GB, or TB.
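As a rough illustration of how a size with a unit label maps to a byte count, the following Python sketch converts strings such as 100MB or 16 MB. It assumes binary multiples (1 KB = 1,024 bytes), whole-number values, and case-insensitive unit labels; these are assumptions, so check the EVMS man page for the exact units and rounding the CLI accepts. The function name `size_to_bytes` is hypothetical.

```python
import re

# Assumption: binary multiples, as commonly used for disk sizes.
UNIT_BYTES = {"KB": 1024, "MB": 1024**2, "GB": 1024**3, "TB": 1024**4}


def size_to_bytes(text: str) -> int:
    """Convert a size such as '100MB' or '16 MB' to a number of bytes."""
    # Accepting lowercase unit labels is also an assumption of this sketch.
    match = re.fullmatch(r"\s*(\d+)\s*([KMGT]B)\s*", text, flags=re.IGNORECASE)
    if match is None:
        raise ValueError(f"unrecognized size: {text!r}")
    value, unit = match.groups()
    return int(value) * UNIT_BYTES[unit.upper()]


print(size_to_bytes("100MB"))  # 104857600
print(size_to_bytes("16 MB"))  # 16777216
```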

Finally, C programming language style comments are supported by the EVMS CLI. Comments can begin and end anywhere except within a quoted string, as shown in the following example:

/* This is a comment */ Create:Vo/*This is a silly place for a comment, but it is allowed.*/lume,"lvm/Sample Container/My LVM Volume",compatibility
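The comment rule can be modeled by a function that deletes `/* ... */` comments while leaving quoted strings untouched. This Python sketch is a hypothetical model of the rule, not the CLI's tokenizer; applied to the example above, it recovers the command without its comments.

```python
from __future__ import annotations

import re

# A /* ... */ comment, or a quoted string in which "" is an escaped quote.
_COMMENT_OR_STRING = re.compile(r'/\*.*?\*/|"(?:[^"]|"")*"', re.DOTALL)


def strip_comments(text: str) -> str:
    """Delete C-style comments that are not inside quoted strings."""

    def keep_strings(match: re.Match) -> str:
        token = match.group(0)
        return "" if token.startswith("/*") else token

    return _COMMENT_OR_STRING.sub(keep_strings, text)


example = (
    '/* This is a comment */ Create:Vo/*This is a silly place for a comment, '
    'but it is allowed.*/lume,"lvm/Sample Container/My LVM Volume",compatibility'
)
print(strip_comments(example).strip())
# Create:Volume,"lvm/Sample Container/My LVM Volume",compatibility
```

Because a quoted string is matched as one token, text such as `"a /* b */"` is kept intact.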

This chapter discusses the EVMS information and error log file and the various logging levels. It also explains how to change the logging level.

The EVMS Engine creates a log file called /var/log/evmsEngine.log every time the Engine is opened. The Engine also saves copies of up to 10 previous Engine sessions in the files /var/log/evmsEngine.n.log, where n is the number of the session between 1 and 10.
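The rotation can be modeled as follows. This Python sketch is an assumption about the mechanics, inferred only from this chapter (the most recent saved log is evmsEngine.1.log and at most 10 are kept); it is not taken from the Engine source, and the function name is hypothetical. It shifts each saved log up by one number, discards the oldest, and renames the current log to evmsEngine.1.log.

```python
import tempfile
from pathlib import Path

MAX_SAVED_LOGS = 10  # the guide states that up to 10 previous sessions are kept


def rotate_engine_logs(log_dir: Path) -> None:
    """Shift saved logs up by one and turn the current log into .1."""

    def saved(n: int) -> Path:
        return log_dir / f"evmsEngine.{n}.log"

    oldest = saved(MAX_SAVED_LOGS)
    if oldest.exists():
        oldest.unlink()  # it would become the 11th saved log, so drop it
    for n in range(MAX_SAVED_LOGS - 1, 0, -1):  # 9, 8, ..., 1
        if saved(n).exists():
            saved(n).replace(saved(n + 1))
    current = log_dir / "evmsEngine.log"
    if current.exists():
        current.replace(saved(1))  # the previous session becomes evmsEngine.1.log


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        log_dir = Path(tmp)
        for session in range(1, 13):  # simulate 12 Engine sessions
            rotate_engine_logs(log_dir)
            (log_dir / "evmsEngine.log").write_text(f"session {session}\n")
        # evmsEngine.log holds session 12, evmsEngine.1.log session 11, ...,
        # evmsEngine.10.log session 2; session 1 has been discarded.
        print(sorted(p.name for p in log_dir.iterdir()))
```

Shifting from the highest number down means no rename ever targets a file that still holds a newer log.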

There are several possible logging levels that you can choose to be collected in /var/log/evmsEngine.log. The "lowest" logging level, critical, collects only messages about serious system problems, whereas the "highest" level, everything, collects all logging related messages. When you specify a particular logging level, the Engine collects messages for that level and all the levels below it.

The following table lists the allowable log levels and the information they provide:

Table 3.1. EVMS logging levels

| Level name | Description |
|---|---|
| Critical | The health of the system or the Engine is in jeopardy; for example, an operation has failed because there is not enough memory. |
| Serious | An operation did not succeed. |
| Error | The user has caused an error. The error messages are provided to help the user correct the problem. |
| Warning | An error has occurred that the system might or might not be able to work around. |
| Default | An error has occurred that the system has already worked around. |
| Details | Detailed information about the system. |
| Debug | Information that helps the user debug a problem. |
| Extra | More information that helps the user debug a problem than the "Debug" level provides. |
| Entry_Exit | Traces the entries and exits of functions. |
| Everything | Verbose output. |
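The cumulative rule (a chosen level also collects every level below it) can be expressed as an ordered enumeration. In this Python sketch the level names follow Table 3.1, but the numeric values are illustrative only and are not the Engine's internal constants; the helper names are hypothetical.

```python
from enum import IntEnum


class LogLevel(IntEnum):
    """Levels from Table 3.1, lowest to highest; values are illustrative."""

    CRITICAL = 0
    SERIOUS = 1
    ERROR = 2
    WARNING = 3
    DEFAULT = 4
    DETAILS = 5
    DEBUG = 6
    EXTRA = 7
    ENTRY_EXIT = 8
    EVERYTHING = 9


def is_logged(message: LogLevel, configured: LogLevel) -> bool:
    """A message is kept if its level is at or below the configured level."""
    return message <= configured


def parse_level(name: str) -> LogLevel:
    """Map a level name such as 'everything' to a LogLevel."""
    return LogLevel[name.strip().upper()]


configured = LogLevel.DEFAULT  # the level used when no -d option is given
print([level.name for level in LogLevel if is_logged(level, configured)])
# ['CRITICAL', 'SERIOUS', 'ERROR', 'WARNING', 'DEFAULT']
```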

By default, when any of the EVMS interfaces is opened, the Engine logs the Default level of messages into the /var/log/evmsEngine.log file. However, if your system is having problems and you want to see more of what is happening, you can raise the logging level; if you want fewer logging messages, you can lower it. To change the logging level, specify the -d parameter and the log level when you start the interface. The following examples show how to open the various interfaces with the highest logging level (everything):

GUI: evmsgui -d everything

Ncurses: evmsn -d everything

CLI: evms -d everything

NOTE

If you use the EVMS mailing list for help with a problem, providing the log file that is created when you open one of the interfaces (as shown in the previous commands) makes it easier for us to help you.

The EVMS GUI lets you change the logging level during an Engine session. To do so, follow these steps:

Select Settings->Log Level->Engine.

Click the Level you want.

The CLI command, probe, opens and closes the Engine, which causes a new log to start. The log that existed before the probe command was issued is renamed /var/log/evmsEngine.1.log and the new log is named /var/log/evmsEngine.log.

Migrating to EVMS allows you to have the flexibility of EVMS without losing the integrity of your existing data. EVMS discovers existing volume management volumes as compatibility volumes. After you have installed EVMS, you can view your existing volumes with the interface of your choice.

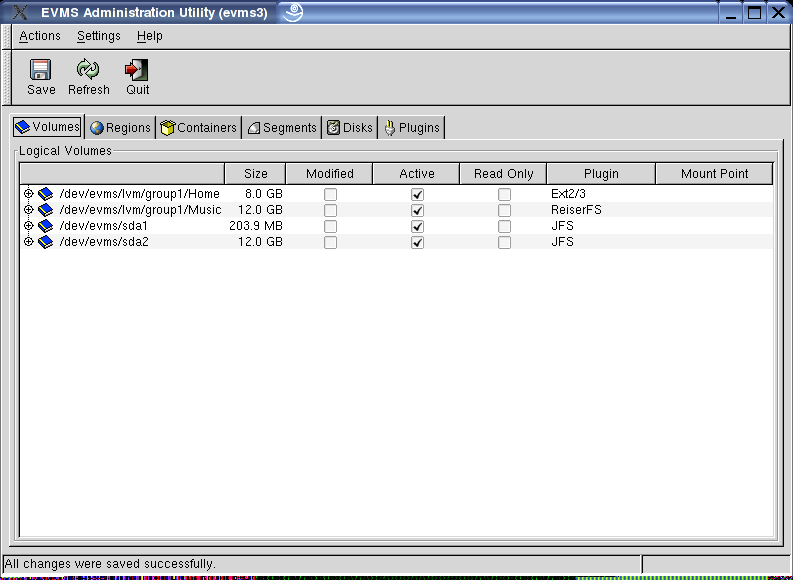

If you are using the EVMS GUI as your preferred interface, you can view your migrated volumes by typing evmsgui at the command prompt. The following window opens, listing your migrated volumes.

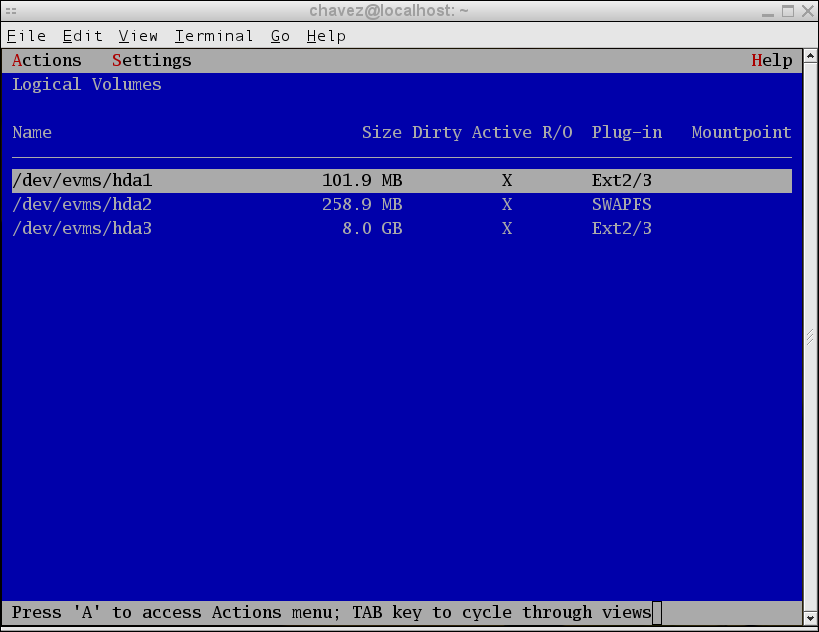

If you are using the Ncurses interface, you can view your migrated volumes by typing evmsn at the command prompt. The following window opens, listing your migrated volumes.

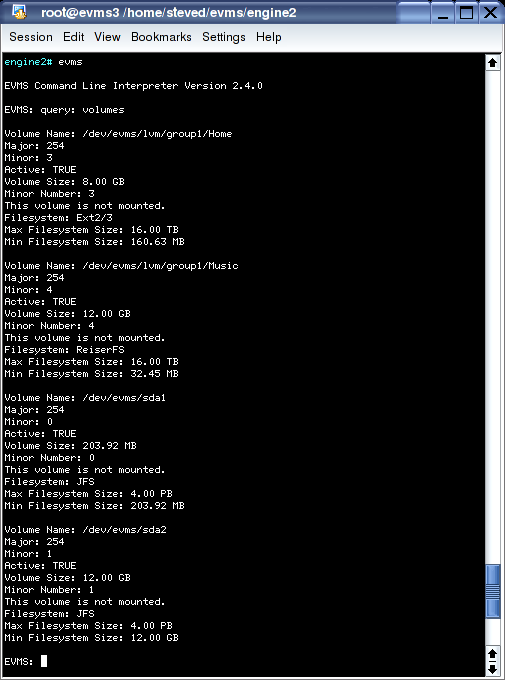

If you are using the Command Line Interpreter (CLI) interface, you can view your migrated volumes by following these steps:

Start the Command Line Interpreter by typing evms at the command line.

Query the volumes by typing the following at the EVMS prompt:

query:volumes

Your migrated volumes are displayed as results of the query.
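The same query can also be run non-interactively by writing it to a command file and passing that file to evms with the -f option (see section 2.3.1). The following Python sketch assembles the evms argument list from the documented options (-c, -p, -f, -rl) and runs it with subprocess. The helper names and the temporary file name are hypothetical, and actually running it requires EVMS to be installed and, usually, root privileges.

```python
from __future__ import annotations

import subprocess
import tempfile
from collections.abc import Sequence
from pathlib import Path


def build_evms_argv(
    command_file: str | Path,
    *,
    commit_on_exit: bool = False,
    parse_only: bool = False,
    params: Sequence[str] = (),
) -> list[str]:
    """Build an evms command line that reads its commands from a file."""
    argv = ["evms"]
    if commit_on_exit:
        argv.append("-c")  # commit only when the CLI exits
    if parse_only:
        argv.append("-p")  # check syntax without executing
    argv += ["-f", str(command_file)]  # batch mode is the default with -f
    if params:
        # -rl must come last: every remaining item is a replacement parameter.
        argv += ["-rl", *params]
    return argv


def run_evms_commands(commands: str, **options) -> subprocess.CompletedProcess:
    """Write commands to a temporary file and run the EVMS CLI on it."""
    with tempfile.TemporaryDirectory() as tmp:
        command_file = Path(tmp) / "commands.evms"  # hypothetical file name
        command_file.write_text(commands)
        return subprocess.run(
            build_evms_argv(command_file, **options),
            capture_output=True,
            text=True,
            check=False,
        )
```

For example, `run_evms_commands("query:volumes")` returns the completed process, whose stdout holds the query output. `build_evms_argv("testcase", commit_on_exit=True, params=["sda", "sdb"])` produces the same command line as the -rl example in section 2.3.1.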

The EVMS interfaces let you view more detailed information about an EVMS object than what is readily available from the main views of the EVMS user interfaces. The type and extent of additional information available depend on the interface you use. For example, the EVMS GUI provides more in-depth information than the CLI does.

The following sections show how to find detailed information on the region lvm/Sample Container/Sample Region, which is part of volume /dev/evms/Sample Volume (created in section 10.2).

With the EVMS GUI, it is only possible to display additional details on an object through the Context Sensitive Menus, as shown in the following steps:

Looking at the volumes view, click the "+" next to volume /dev/evms/Sample Volume. Alternatively, look at the regions view.

Right click lvm/Sample Container/Sample Region.

Point at Display Details... and click. A new window opens with additional information about the selected region.

Click More by the Logical Extents box. Another window opens that displays the mappings of logical extents to physical extents.

Follow these steps to display additional details on an object with Ncurses:

Press Tab to reach the Storage Regions view.

Scroll down using the down arrow until lvm/Sample Container/Sample Region is highlighted.

Press Enter.

In the context menu, scroll down using the down arrow to highlight "Display Details..."

Press Enter to activate the menu item.

In the Detailed Information dialog, use the down arrow to highlight the "Logical Extents" item and then use spacebar to open another window that displays the mappings of logical extents to physical extents.

Use the query command (abbreviated q) with filters to display details about EVMS objects. There are two filters that are especially helpful for navigating within the command line: list options (abbreviated lo) and extended info (abbreviated ei).

The list options command tells you what can currently be done and what options you can specify. To use this command, first build a traditional query command starting with the command name query, followed by a colon (:), and then the type of object you want to query (for example, volumes, objects, plug-ins). Then, you can use filters to narrow the search to only the area you are interested in. For example, to determine the acceptable actions at the current time on lvm/Sample Container/Sample Region, enter the following command:

query: regions, region="lvm/Sample Container/Sample Region", list options

The extended info filter is the equivalent of Display Details in the EVMS GUI and Ncurses interfaces. The command takes the following form: query, followed by a colon (:), the filter (extended info), a comma (,), and the object you want more information about. The command returns a list containing the field names, titles, descriptions and values for each field defined for the object. For example, to obtain details on lvm/Sample Container/Sample Region, enter the following command:

query: extended info, "lvm/Sample Container/Sample Region"

Many of the field names that are returned by the extended info filter can be expanded further by specifying the field name or names at the end of the command, separated by commas. For example, if you wanted additional information about logical extents, the query would look like the following:

query: extended info, "lvm/Sample Container/Sample Region", Extents
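If you generate CLI commands from a script, the query forms shown in this section can be assembled programmatically. This Python sketch uses hypothetical helper names. It quotes object names by doubling any embedded quotation marks, as described in the notes on commands and command files, and it reproduces the list options and extended info queries above.

```python
def quote_name(name: str) -> str:
    """Quote an EVMS name, doubling any embedded quotation marks."""
    return '"' + name.replace('"', '""') + '"'


def list_options_query(object_type: str, filter_name: str, name: str) -> str:
    """Build e.g.: query: regions, region="...", list options"""
    return f"query: {object_type}, {filter_name}={quote_name(name)}, list options"


def extended_info_query(name: str, *fields: str) -> str:
    """Build e.g.: query: extended info, "...", Extents"""
    return ", ".join(["query: extended info", quote_name(name), *fields])


region = "lvm/Sample Container/Sample Region"
print(list_options_query("regions", "region", region))
print(extended_info_query(region))
print(extended_info_query(region, "Extents"))
```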

This chapter discusses when to use a segment manager, what the different types of segment managers are, how to add a segment manager to a disk, and how to remove a segment manager.

Adding a segment manager to a disk allows the disk to be subdivided into smaller storage objects called disk segments. The add command causes a segment manager to create appropriate metadata and expose freespace that the segment manager finds on the disk. You need to add segment managers when you have a new disk or when you are switching from one partitioning scheme to another.

EVMS displays disk segments as the following types:

Data: a set of contiguous sectors that has been allocated from a disk and can be used to construct a volume or object.

Freespace: a set of contiguous sectors that are unallocated or not in use. Freespace can be used to create a segment.

Metadata: a set of contiguous sectors that contain information needed by the segment manager.

There are seven types of segment managers in EVMS: DOS, GPT, S/390, Cluster, BSD, MAC, and BBR.

The most commonly used segment manager is the DOS Segment Manager. This plug-in provides support for traditional DOS disk partitioning. The DOS Segment Manager also recognizes and supports the following variations of the DOS partitioning scheme:

OS/2: an OS/2 disk has additional metadata sectors that contain information needed to reconstruct disk segments.

Embedded partitions: support for BSD, SolarisX86, and UnixWare is sometimes found embedded in primary DOS partitions. The DOS Segment Manager recognizes and supports these slices as disk segments.

The GUID Partitioning Table (GPT) Segment Manager handles the new GPT partitioning scheme on IA-64 machines. The Intel Extensible Firmware Interface Specification requires that firmware be able to discover partitions and produce logical devices that correspond to disk partitions. The partitioning scheme described in the specification is called GPT due to the extensive use of Globally Unique Identifier (GUID) tagging. A GUID is a 128-bit identifier, also referred to as a Universally Unique Identifier (UUID). As described in the Intel Wired For Management Baseline Specification, a GUID is a combination of time and space fields that produce an identifier that is unique across an entire UUID space. These identifiers are used extensively on GPT-partitioned disks for tagging entire disks and individual partitions. GPT-partitioned disks serve several functions, such as:

keeping a primary and backup copy of metadata

replacing msdos partition nesting by allowing many partitions

using 64-bit logical block addressing

tagging partitions and disks with GUID descriptors
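Python's standard uuid module can generate the kind of time-and-space identifier described above. `uuid.uuid1()` combines a timestamp and clock sequence (the time fields) with a node identifier (the space field). The sketch below shows that the result is 128 bits (16 bytes). It also shows the mixed-endian byte order, exposed by Python as `bytes_le`, in which EFI and GPT store GUIDs on disk. It illustrates the identifier format only; it does not read or write a partition table.

```python
import uuid

guid = uuid.uuid1()  # time fields + clock sequence + node ("space") field

print(guid)                 # canonical text form, as used to name partitions
print(guid.version)         # 1: the time-based UUID variant
print(len(guid.bytes) * 8)  # 128

# GPT stores GUIDs with the first three fields little-endian.
on_disk = guid.bytes_le
assert uuid.UUID(bytes_le=on_disk) == guid  # round-trips losslessly
```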

The GPT Segment Manager scales better to large disks. It provides more redundancy with added reliability and uses unique names. However, the GPT Segment Manager is not compatible with DOS, OS/2, or Windows®.

The S/390 Segment Manager is used exclusively on System/390 mainframes. The S/390 Segment Manager has the ability to recognize various disk layouts found on an S/390 machine, and provide disk segment support for this architecture. The two most common disk layouts are Linux Disk Layout (LDL) and Common Disk Layout (CDL).

The principal difference between LDL and CDL is that an LDL disk cannot be further subdivided. An LDL disk produces a single metadata disk segment and a single data disk segment. There is no freespace on an LDL disk, and you cannot delete or resize the data segment. A CDL disk can be subdivided into multiple data disk segments because it contains metadata that is missing from an LDL disk, specifically the Volume Table of Contents (VTOC) information.

The S/390 Segment Manager is the only segment manager plug-in capable of understanding the unique S/390 disk layouts. The S/390 Segment Manager cannot be added to or removed from a disk.

The cluster segment manager (CSM) supports high availability clusters. When the CSM is added to a shared storage disk, it writes metadata on the disk that:

provides a unique disk ID (guid)

names the EVMS container the disk will reside within

specifies the cluster node (nodeid) that owns the disk

specifies the HA cluster (clusterid)

This metadata allows the CSM to build containers that support failover. The CSM constructs an EVMS container object that consumes all shared storage objects it discovers that belong to the same container, and the container produces a single segment object for each consumed storage object. A failover of the EVMS resource is then accomplished by reassigning the container to the standby cluster node and having that node rerun its discovery process.

BSD refers to the Berkeley Software Distribution UNIX® operating system. The EVMS BSD segment manager is responsible for recognizing and producing EVMS segment storage objects that map BSD partitions. A BSD disk may have a slice table in the very first sector on the disk for compatibility purposes with other operating systems. For example, a DOS slice table might be found in the usual MBR sector. The BSD disk would then be found within a disk slice that is located using the compatibility slice table. However, BSD has no need for the slice table and can fully dedicate the disk to itself by placing the disk label in the very first sector. This is called a "fully dedicated disk" because BSD uses the entire disk and does not provide a compatibility slice table. The BSD segment manager recognizes such "fully dedicated disks" and provides mappings for the BSD partitions.

Apple-partitioned disks use a disk label that is recognized by the MAC segment manager. The MAC segment manager recognizes the disk label during discovery and creates EVMS segments to map the MacOS disk partitions.

The bad block replacement (BBR) segment manager enhances the reliability of a disk by remapping bad storage blocks. When BBR is added to a disk, it writes metadata on the disk that:

reserves replacement blocks

maps bad blocks to reserved blocks

Bad blocks occur when an I/O error is detected for a write operation. When this happens, I/O normally fails and the failure code is returned to the calling program code. BBR detects failed write operations and remaps the I/O to a reserved block on the disk. Afterward, BBR restarts the I/O using the reserve block.

Every block of storage has an address, called a logical block address, or LBA. When BBR is added to a disk, it provides two critical functions: remap and recovery. When an I/O operation is sent to disk, BBR inspects the LBA in the I/O command to see if the LBA has been remapped to a reserve block due to some earlier I/O error. If BBR finds a mapping between the LBA and a reserve block, it updates the I/O command with the LBA of the reserve block before sending it on to the disk. Recovery occurs when BBR detects an I/O error and remaps the bad block to a reserve block. The new LBA mapping is saved in BBR metadata so that subsequent I/O to the LBA can be remapped.
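The remap and recovery steps can be sketched as a toy Python model. Everything here is hypothetical: the class and method names, the reserve LBAs, and the in-memory dictionary that stands in for the BBR metadata EVMS keeps on disk. The simulated device raises OSError for writes to bad blocks.

```python
from __future__ import annotations

from collections.abc import Callable, Iterable

DeviceWrite = Callable[[int, bytes], None]


class BadBlockRelocator:
    """Toy model of BBR remapping; not the EVMS implementation."""

    def __init__(self, reserved_lbas: Iterable[int]) -> None:
        self.free_reserve = list(reserved_lbas)  # unused replacement blocks
        self.remap: dict[int, int] = {}          # bad LBA -> reserve LBA

    def translate(self, lba: int) -> int:
        """Remap: send I/O for a previously failed LBA to its reserve block."""
        return self.remap.get(lba, lba)

    def recover(self, lba: int) -> int:
        """Recovery: assign a reserve block to an LBA whose write failed."""
        if not self.free_reserve:
            raise OSError(f"no reserve blocks left for LBA {lba}")
        spare = self.free_reserve.pop(0)
        self.remap[lba] = spare  # EVMS persists this mapping in BBR metadata
        return spare

    def write(self, lba: int, data: bytes, device_write: DeviceWrite) -> None:
        try:
            device_write(self.translate(lba), data)
        except OSError:
            # Restart the failed write on a freshly assigned reserve block.
            device_write(self.recover(lba), data)


def make_device(bad_lbas: set[int]) -> tuple[dict[int, bytes], DeviceWrite]:
    """Simulated disk whose writes to bad_lbas fail with OSError."""
    storage: dict[int, bytes] = {}

    def device_write(lba: int, data: bytes) -> None:
        if lba in bad_lbas:
            raise OSError(f"write error at LBA {lba}")
        storage[lba] = data

    return storage, device_write


storage, device_write = make_device(bad_lbas={5})
bbr = BadBlockRelocator(reserved_lbas=[1000, 1001])  # hypothetical reserve area
bbr.write(5, b"data", device_write)  # LBA 5 fails, so the data lands in block 1000
print(bbr.translate(5), storage)     # 1000 {1000: b'data'}
```

Later writes to LBA 5 go straight to block 1000 through `translate`, which is the remap function; the first failure triggers `recover`.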

When you add a segment manager to a disk, the segment manager needs to change the basic layout of the disk. This change means that some sectors are reserved for metadata and the remaining sectors are made available for creating data disk segments. Metadata sectors are written to disk to save information needed by the segment manager; previous information found on the disk is lost. Before adding a segment manager to an existing disk, you must remove any existing volume management structures, including any previous segment manager.

When a new disk is added to a system, the disk usually contains no data and has not been partitioned. If this is the case, the disk shows up in EVMS as a compatibility volume because EVMS cannot tell if the disk is being used as a volume. To add a segment manager to the disk so that it can be subdivided into smaller disk segment objects, tell EVMS that the disk is not a compatibility volume by deleting the volume information.

If the new disk was moved from another system, chances are good that the disk already contains metadata. If it does, the disk shows up in EVMS with storage objects that were produced from the existing metadata. Deleting these objects allows you to add a different segment manager to the disk, but you lose any old data.

This section shows how to add a segment manager with EVMS.

EVMS initially displays the physical disks it sees as volumes. Assume that you have added a new disk to the system that EVMS sees as sde. This disk contains no data and has not been subdivided (no partitions). EVMS assumes that this disk is a compatibility volume known as /dev/evms/sde.

NOTE

In the following example, the DOS Segment Manager creates two segments on the disk: a metadata segment known as sde_mbr, and a segment to represent the available space on the drive, sde_freespace1. This freespace segment (sde_freespace1) can be divided into other segments because it represents space on the drive that is not in use.

To add the DOS Segment Manager to sde, first remove the volume, /dev/evms/sde:

Select Actions->Delete->Volume.

Select /dev/evms/sde.

Click Delete.

Alternatively, you can remove the volume through the GUI context sensitive menu:

From the Volumes tab, right click /dev/evms/sde.

Click Delete.

After the volume is removed, add the DOS Segment Manager:

Select Actions->Add->Segment Manager to Storage Object.

Select DOS Segment Manager.

Click Next.

Select sde.

Click Add.

To add the DOS Segment Manager to sde, first remove the volume /dev/evms/sde:

Select Actions->Delete->Volume.

Select /dev/evms/sde.

Activate Delete.

Alternatively, you can remove the volume through the context sensitive menu:

From the Logical Volumes view, press Enter on /dev/evms/sde.

Activate Delete.

After the volume is removed, add the DOS Segment Manager:

Select Actions->Add->Segment Manager to Storage Object.

Select DOS Segment Manager.

Activate Next.

Select sde.

Activate Add.

When a segment manager is removed from a disk, the disk can be reused by other plug-ins. The remove command causes the segment manager to remove its partition or slice table from the disk, leaving the raw disk storage object that then becomes an available EVMS storage object. As an available storage object, the disk is free to be used by any plug-in when storage objects are created or expanded. You can also add any of the segment managers to the available disk storage object to subdivide the disk into segments.

Most segment manager plug-ins check to determine if any of the segments are still in use by other plug-ins or are still part of volumes. If a segment manager determines that there are no disks from which it can safely remove itself, it will not be listed when you use the remove command. In this case, you should delete the volume or storage object that is consuming segments from the disk you want to reuse.

This section shows how to remove a segment manager with EVMS.

NOTE

In the following example, the DOS Segment Manager has one primary partition on disk sda. The segment is a compatibility volume known as /dev/evms/sda1.

Follow these steps to remove a segment manager with the GUI context sensitive menu:

From the Volumes tab, right click /dev/evms/sda1.

Click Delete.

Select Actions->Remove->Segment Manager from Storage Object.

Select DOS Segment Manager, sda.

Click Remove.

Follow these steps to remove a segment manager with the Ncurses interface:

Select Actions->Delete->Volume.

Select /dev/evms/sda1.

Activate Delete.

Select Actions->Remove->Segment Manager from Storage Object.

Select DOS Segment Manager, sda.

Activate Remove.

This chapter discusses when to use segments and how to create them using different EVMS interfaces.

A disk can be subdivided into smaller storage objects called disk segments; a segment manager plug-in provides this capability. Besides subdividing a disk, you might create disk segments to maintain compatibility on a dual-boot system where the other operating system requires disk partitions. Before creating a disk segment, you must choose a segment manager plug-in to manage the disk and assign the segment manager to the disk. An explanation of when and how to assign segment managers can be found in Chapter 6. "Adding and removing a segment manager".

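This section provides a detailed explanation of how to create a segment with EVMS by providing instructions to help you complete the following task: create a 100 MB segment from the freespace segment sde_freespace1, which the DOS Segment Manager created on disk sde.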

To create a segment using the GUI, follow the steps below:

Select Actions->Create->Segment to see a list of segment manager plug-ins.

Select DOS Segment Manager. Click Next.

The next dialog window lists the free space storage objects suitable for creating a new segment.

Select sde_freespace1. Click Next.

The last dialog window presents the free space object you selected as well as the available configuration options for that object.

Enter 100 MB. Required fields are denoted by the "*" in front of the field description. The DOS Segment Manager provides default values, but you might want to change some of these values.

After you have filled in information for all the required fields, the Create button becomes available.

Click Create. A window opens to display the outcome.

Alternatively, you can perform some of the steps to create a segment from the GUI context sensitive menu:

From the Segments tab, right click on sde_freespace1.

Click Create Segment...

Continue beginning with step 4 of the GUI instructions.

To create a segment using Ncurses, follow these steps:

Select Actions->Create->Segment to see a list of segment manager plug-ins.

Select DOS Segment Manager. Activate Next.

The next dialog window lists free space storage objects suitable for creating a new segment.

Select sde_freespace1. Activate Next.

Highlight the size field and press spacebar.

At the "::" prompt enter 100MB. Press Enter.

After all required values have been completed, the Create button becomes available.

Activate Create.

Alternatively, you can perform some of the steps to create a segment from the context sensitive menu:

From the Segments view, press Enter on sde_freespace1.

Activate Create Segment.

Continue beginning with step 4 of the Ncurses instructions.

To create a data segment from a freespace segment, use the Create command. The arguments the Create command accepts vary depending on what is being created. The first argument to the Create command indicates what is to be created, which in the above example is a segment. The remaining arguments are the freespace segment to allocate from and a list of options to pass to the segment manager. The command to accomplish this is:

Create: Segment,sde_freespace1, size=100MB

NOTE

The Allocate command also works to create a segment.

The previous example accepts the default values for all options you don't specify. To see the options for this command, type:

query:plugins,plugin=DosSegMgr,list options

This chapter discusses when and how to create a container.

Segments and disks can be combined to form a container. Containers allow you to combine storage objects and then subdivide those combined storage objects into new storage objects. You can combine storage objects to implement the volume group concept as found in the AIX and Linux logical volume managers.

Containers are the beginning of more flexible volume management. You might want to create a container in order to account for flexibility in your future storage needs. For example, you might need to add additional disks when your applications or users need more storage.

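This section provides a detailed explanation of how to create a container with EVMS by providing instructions to help you complete the following task: use the LVM Region Manager to combine the disks sdc, sdd, and hdc into a container named Sample Container with a PE size of 16 MB.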

To create a container using the EVMS GUI, follow these steps:

Select Actions->Create->Container to see a list of plug-ins that support container creation.

Select the LVM Region Manager. Click Next.

The next dialog window contains a list of storage objects that the LVM Region Manager can use to create a container.

Select sdc, sdd, and hdc from the list. Click Next.

Enter the name Sample Container for the container and 16MB in the PE size field.

Click Create. A window opens to display the outcome.

To create a container using the Ncurses interface, follow these steps:

Select Actions->Create->Container to see a list of plug-ins that support container creation.

Select the LVM Region Manager. Activate Next.

The next dialog window contains a list of storage objects that the LVM Region Manager can use to create the container.

Select sdc, sdd, and hdc from the list. Activate Next.

Press spacebar to select the field for the container name.

Type Sample Container at the "::" prompt. Press Enter.

Scroll down until PE Size is highlighted. Press spacebar.

Scroll down until 16MB is highlighted. Press spacebar.

Activate OK.

Activate Create.

The Create command creates containers. The first argument in the Create command is the type of object to produce, in this case a container. The Create command then accepts the following arguments: the region manager to use along with any parameters it might need, and the segments or disks to create the container from. The command to complete the previous example is:

Create:Container,LvmRegMgr={name="Sample Container",pe_size=16MB},sdc,sdd,hdc

The previous example accepts the default values for all options you don't specify. To see the options for this command, type:

query:plugins,plugin=LvmRegMgr,list options

Regions can be created from containers, but they can also be created from other regions, segments, or disks. Most region managers that support containers create one or more freespace regions to represent the freespace within the container. This function is analogous to the way a segment manager creates a freespace segment to represent unused disk space.

You might create regions because you want the features that a particular region manager provides. You can also create regions to be compatible with other volume management technologies, such as MD or LVM. For example, if you wanted to make a volume that is compatible with Linux LVM, you would create a region out of a Linux LVM container and then a compatibility volume from that region.

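This section tells how to create a region with EVMS by providing instructions to help you complete the following task: create a 1000 MB region named Sample Region from the freespace region lvm/Sample Container/Freespace.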

To create a region, follow these steps:

Select Actions->Create->Region.

Select the LVM Region Manager. Click Next.

NOTE

You might see additional region managers that were not in the selection list when you were creating the storage container because not all region managers are required to support containers.

Select the freespace region from the container you created in Chapter 8. "Creating a container". Verify that the region is named lvm/Sample Container/Freespace. Click Next.

The fields in the next window are the options for the LVM Region Manager plug-in; the options marked with an "*" are required.

Fill in the name, Sample Region.

Enter 1000MB in the size field.

Click the Create button to complete the operation. A window opens to display the outcome.

Alternatively, you can perform some of the steps for creating a region with the GUI context sensitive menu:

From the Regions tab, right click lvm/Sample Container/Freespace.

Click Create Region.

Continue beginning with step 4 of the GUI instructions.

To create a region, follow these steps:

Select Actions->Create->Region.

Select the LVM Region Manager. Activate Next.

Select the freespace region from the container you created earlier in Chapter 8. "Creating a container". Verify that the region is named lvm/Sample Container/Freespace. Activate Next.

Scroll to the Name field, and press spacebar.

Type Sample Region at the "::" prompt. Press Enter.

Scroll to the size field, and press spacebar.

Type 1000MB at the "::" prompt. Press Enter.

Activate Create.

Alternatively, you can perform some of the steps for creating a region with the context sensitive menu:

From the Storage Regions view, press Enter on lvm/Sample Container/Freespace.

Activate the Create Region menu item.

Continue beginning with step 4 of the Ncurses instructions.

Create regions with the Create command. Arguments to the Create command are the following: the keyword Region, the name of the region manager to use, the region manager's options, and the objects to consume. The form of this command is:

Create:region, LvmRegMgr={name="Sample Region", size=1000MB}, "lvm/Sample Container/Freespace"

The LVM Region Manager supports many options for creating regions. To see the available options for creating regions and containers, use the following Query:

query:plugins,plugin=LvmRegMgr,list options

This chapter discusses the EVMS drive linking feature, which is implemented by the drive link plug-in, and tells how to create, expand, shrink, and delete a drive link.

Drive linking linearly concatenates objects, allowing you to create larger storage objects and volumes from smaller individual pieces. For example, say you need a 1 GB volume but do not have that much contiguous space available. Drive linking lets you link two or more objects together to form the 1 GB volume.
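To make the idea of linear concatenation concrete, the following Python sketch models a drive link as an ordered list of link objects and translates a logical sector number on the drive link into a link object and an offset within it. This is a simplified model under stated assumptions, not EVMS code: each link object is described only by a name and a size in sectors, and the function names are hypothetical.

```python
from bisect import bisect_right
from itertools import accumulate


def build_starts(sizes):
    """Return the starting logical sector of each link object."""
    return [0] + list(accumulate(sizes))[:-1]


def map_sector(links, lsn):
    """Translate a drive link logical sector number (LSN) into
    (link object name, sector offset within that link object).

    links is an ordered list of (name, size_in_sectors) tuples.
    """
    sizes = [size for _, size in links]
    total = sum(sizes)
    if not 0 <= lsn < total:
        raise ValueError(f"sector {lsn} is outside a drive link of {total} sectors")
    starts = build_starts(sizes)
    # The link object holding lsn is the last one whose start is <= lsn.
    index = bisect_right(starts, lsn) - 1
    return links[index][0], lsn - starts[index]
```

A binary search over the starting sectors keeps each lookup fast even when many link objects are chained together.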

The types of objects that can be drive linked include disks, segments, regions, and other feature objects.

Any resizing of an existing drive link, whether to grow it or shrink it, must be coordinated with the appropriate file system operations. EVMS handles these file system operations automatically.

Because drive linking is an EVMS-specific feature that contains EVMS metadata, it is not backward compatible with other volume-management schemes.

The drive link plug-in consumes storage objects, called link objects, to produce a larger drive link object whose address space spans the link objects. The drive link plug-in knows how to assemble the link objects so as to create the exact same address space every time. The information required to do this is kept on each link object as persistent drive-link metadata. During discovery, the drive link plug-in inspects each known storage object for this metadata. The presence of this metadata identifies the storage object as a link object. The information contained in the metadata is sufficient to:

Identify the link object itself.

Identify the drive link storage object that the link object belongs to.

Identify all link objects belonging to the drive link storage object.

Establish the order in which to combine the child link objects.

If any link objects are missing at the conclusion of the discovery process, the drive link storage object contains gaps where the missing link objects occur. In such cases, the drive link plug-in attempts to fill in each gap with a substitute link object and constructs the drive link storage object in read-only mode, which allows for recovery action. The missing object might reside on removable storage that has been removed, or perhaps a lower-layer plug-in failed to produce the missing object. Whatever the reason, a read-only drive link storage object, together with the logged errors, helps you take the appropriate actions to recover the drive link.
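The discovery and gap-filling behavior described above can be sketched as follows. The metadata layout here is an assumption made for illustration: each hypothetical LinkMetadata record names the drive link it belongs to, its own position, and the number of sectors contributed by every link object. Real EVMS metadata is laid out differently. Missing positions are filled with a placeholder of the recorded size so that later link objects keep their addresses, and the result is flagged read-only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkMetadata:
    """Hypothetical drive link metadata record (illustration only)."""
    drive_link_id: str   # drive link this link object belongs to
    index: int           # position of this link object in the drive link
    link_sizes: tuple    # sectors contributed by every link object, in order


def assemble(drive_link_id, discovered):
    """Rebuild one drive link from the objects found during discovery.

    discovered maps an object name to its LinkMetadata, or to None when the
    object carries no drive link metadata. Returns (links, read_only), where
    links is an ordered list of (name, size_in_sectors) tuples.
    """
    members = {
        md.index: name
        for name, md in discovered.items()
        if md is not None and md.drive_link_id == drive_link_id
    }
    if not members:
        raise ValueError(f"no link objects found for {drive_link_id}")
    # Any surviving link object's metadata describes the whole drive link.
    some_name = next(iter(members.values()))
    sizes = discovered[some_name].link_sizes
    links = []
    for position, size in enumerate(sizes):
        if position in members:
            links.append((members[position], size))
        else:
            # A substitute link object keeps later sectors at their addresses.
            links.append((f"<missing link {position}>", size))
    read_only = len(members) < len(sizes)
    return links, read_only
```

Because the order comes from the metadata rather than from the order in which objects are discovered, the same address space is rebuilt every time.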

The drive link plug-in provides a list of acceptable objects from which it can create a drive-link object. When you create an EVMS storage object and then choose the drive link plug-in, a list of acceptable objects is provided that you can choose from. The ordering of the drive link is implied by the order in which you pick objects from the provided list. After you provide a name for the new drive-link object, the identified link objects are consumed and the new drive-link object is produced. The name for the new object is the only option when creating a drive-link.

Only the last object in a drive link can be expanded, shrunk or removed. Additionally, a new object can be added to the end of an existing drive link only if the file system (if one exists) permits. Any resizing of a drive link, whether to grow it or shrink it, must be coordinated with the appropriate file system operations. EVMS handles these file system operations automatically.

This section shows how to create a drive link with EVMS:

To create the drive link using the GUI, follow these steps:

Select Actions->Create->Feature Object to see a list of EVMS features.

Select Drive Linking Feature.

Click Next.

Click the objects you want to compose the drive link: sde4 and hdc2.

Click Next.

Type dl in the "name" field.

Click Create.

The last dialog window presents the objects you selected as well as the available configuration options for the drive link.

Alternatively, you can perform some of the steps to create a drive link with the GUI context sensitive menu:

From the Available Objects tab, right click sde4.

Click Create Feature Object...

Continue creating the drive link beginning with step 2 of the GUI instructions. In step 4, sde4 is selected for you. You can also select hdc2.

To create the drive link using Ncurses, follow these steps:

Select Actions->Create->Feature Object to see a list of EVMS features.

Select Drive Linking Feature.

Activate Next.

Use spacebar to select the objects you want to compose the drive link from: sde4 and hdc2.

Activate Next.

Press spacebar to edit the Name field.

Type dl at the "::" prompt. Press Enter.

Activate Create.

Alternatively, you can perform some of the steps to create a drive link with the context sensitive menu:

From the Available Objects view, press Enter on sde4.

Activate the Create Feature Object menu item.

Continue creating the drive link beginning with step 4 of the Ncurses instructions. sde4 will be pre-selected. You can also select hdc2.

Use the create command to create a drive link through the CLI. You pass the "object" keyword to the create command, followed by the plug-in and its options, and finally the objects.

To determine the options for the plug-in you are going to use, issue the following command:

query: plugins, plugin=DriveLink, list options

Now construct the create command, as follows:

create: object, DriveLink={Name=dl}, sde4, hdc2

A drive link is an aggregating storage object that is built by combining a number of storage objects, the link objects, into a larger resulting object. The ordering of the link objects, as well as the number of sectors each one contributes, is described by drive link metadata. The metadata allows the drive link plug-in to recreate the drive link, spanning the link objects in a consistent manner. Allowing an arbitrary link object to expand would corrupt the drive link, because changing the size of any link object other than the last one shifts the address of every sector that follows it; the ordering and size of the link objects are vital to the correct operation of the drive link. However, expansion can be controlled by only allowing sectors to be added at the end of the drive link storage object. This does not disturb the ordering of link objects in any manner and, because sectors are only added at the end of the drive link, existing sectors keep the same address (logical sector number) as before the expansion. Therefore, a drive link can be expanded in two ways:

By adding an additional storage object to the end of the drive link.

By expanding the last storage object in the drive link.

If the expansion point is the drive link storage object, you can perform the expansion by adding an additional storage object to the drive link. This is done by choosing from a list of acceptable objects during the expand operation. Multiple objects can be selected and added to the drive link.

If the expansion point is the last storage object in the drive link, then you expand the drive link by interacting with the plug-in that produced the object. For example, if the last link object is a segment, then the segment manager plug-in that produced it expands the link object. Afterward, the drive link plug-in notices the size difference and updates the drive link metadata to reflect the new size of the child object.
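The following Python sketch, a simplified model with hypothetical function names, compares the starting sector of each link object before and after the two permitted expansions, and after growing an inner link object, which the drive link plug-in does not allow. Sizes are in sectors and are invented for illustration.

```python
from itertools import accumulate


def link_starts(sizes):
    """Starting logical sector of each link object, in drive link order."""
    return [0] + list(accumulate(sizes))[:-1]


def append_link(sizes, new_size):
    """Permitted: add a storage object to the end of the drive link."""
    return sizes + [new_size]


def grow_last_link(sizes, extra):
    """Permitted: expand the last storage object in the drive link."""
    return sizes[:-1] + [sizes[-1] + extra]


def grow_inner_link(sizes, index, extra):
    """Not permitted by the drive link plug-in; shown only to illustrate why."""
    grown = list(sizes)
    grown[index] += extra
    return grown


sizes = [1000, 500]
print(link_starts(sizes))                      # existing layout
print(link_starts(append_link(sizes, 300)))    # existing starts unchanged
print(link_starts(grow_last_link(sizes, 300))) # existing starts unchanged
print(link_starts(grow_inner_link([1000, 500, 300], 1, 200)))  # last link shifts
```

In both permitted cases the starting sectors of the existing link objects stay the same, so data already on the drive link keeps its address; growing the second of three link objects moves the third one 200 sectors further out.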

There are no expand options.

Shrinking a drive link has the same restrictions as expanding a drive link. A drive link object can only be shrunk by removing sectors from the end of the drive link. This can be done in the following ways:

By removing link objects from the end of the drive link.

By shrinking the last storage object in the drive link.

The drive link plug-in attempts to orchestrate the shrinking of a drive-link storage object by only listing the last link object. If you select this object, the drive link plug-in then lists the next-to-last link object, and so forth, moving backward through the link objects to satisfy the shrink command.

If the shrink point is the last storage object in the drive link, then you shrink the drive link by interacting with the plug-in that produced the object.
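The backward walk can be sketched as a small planning function. This is a hypothetical illustration, not the plug-in's code: it removes whole link objects from the end while they fit within the requested reduction, assumes at least one link object must remain, and returns any leftover amount, which would have to come from shrinking the new last object through the plug-in that produced it.

```python
def plan_shrink(links, sectors_to_remove):
    """Plan a drive link shrink by working backward from the end.

    links is an ordered list of (name, size_in_sectors) tuples.
    Returns (kept_links, removed_names, remainder), where remainder is the
    amount still to be removed by shrinking the new last link object.
    """
    kept = list(links)
    removed = []
    remaining = sectors_to_remove
    # Only the last link object is ever a candidate, then the next-to-last,
    # and so on; keep at least one link object (an assumption of this sketch).
    while len(kept) > 1 and kept[-1][1] <= remaining:
        name, size = kept.pop()
        removed.append(name)
        remaining -= size
    return kept, removed, remaining
```

For example, with link objects of 1000, 500, and 300 sectors, a 400-sector reduction removes the last link object entirely and leaves 100 sectors to be trimmed from the new last object.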

There are no shrink options.

This chapter discusses snapshotting and tells how to create a snapshot.

A snapshot represents a frozen image of a volume. The source of a snapshot is called an "original." When a snapshot is created, it looks exactly like the original at that point in time. As changes are made to the original, the snapshot remains the same and looks exactly like the original at the time the snapshot was created.

Snapshotting allows you to keep a volume online while a backup is created. This method is much more convenient than a data backup where a volume must be taken offline to perform a consistent backup. When snapshotting, a snapshot of the volume is created and the backup is taken from the snapshot, while the original remains in active use.

Creating and activating a snapshot is a two-step process. The first step is to create the snapshot object. The snapshot object specifies where the saved data will be stored when changes are made to the original. The second step is to activate the object by making an EVMS volume from it.

You can create a snapshot object from any unused storage object in EVMS (disks, segments, regions, or feature objects). The size of this consumed object is the size available to the snapshot object. The snapshot object can be smaller or larger than the original volume. If the object is smaller, the snapshot volume could fill up as data is copied from the original to the snapshot, given sufficient activity on the original. In this situation, the snapshot is deactivated and additional I/O to the snapshot fails.
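The behavior described above, where data is saved to the snapshot object only as the original changes, is commonly called copy-on-write. The following Python class is a simplified model of it, not EVMS code: volumes are lists of chunks, the snapshot object holds a fixed number of saved chunks, and the class and method names are hypothetical. It shows why a snapshot object smaller than the original can fill up and be deactivated.

```python
class CowSnapshot:
    """Simplified copy-on-write snapshot model (illustration only).

    original: a list of chunks standing in for the original volume.
    capacity: how many chunks the snapshot object can hold.
    """

    def __init__(self, original, capacity):
        self.original = original
        self.capacity = capacity
        self.saved = {}      # chunk index -> data as it was at snapshot time
        self.active = True

    def write_original(self, index, data):
        # Before the first overwrite of a chunk, copy the old data aside.
        if self.active and index not in self.saved:
            if len(self.saved) >= self.capacity:
                self.active = False   # snapshot object is full: deactivate
            else:
                self.saved[index] = self.original[index]
        # Writes to the original always proceed.
        self.original[index] = data

    def read_snapshot(self, index):
        if not self.active:
            raise OSError("snapshot deactivated; I/O to the snapshot fails")
        # Unchanged chunks are read from the original itself.
        return self.saved.get(index, self.original[index])
```

Rewriting a chunk that has already been saved consumes no extra space, so what fills the snapshot object is the amount of distinct data changed on the original.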

Base the size of the snapshot object on the amount of activity that is likely to take place on the original during the lifetime of the snapshot. The more changes that occur on the original and the longer the snapshot is expected to remain active, the larger the snapshot object should be. This calculation is not simple and may require trial and error to find the correct snapshot object size for a particular situation. The goal is to create a snapshot object large enough to prevent the snapshot from filling up and being deactivated, yet small enough not to waste disk space. If the snapshot object is the same size as the original volume, or a little larger to account for the snapshot mapping tables, the snapshot is never deactivated.
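As a rough starting point before any trial and error, the sizing guidance above can be reduced to a back-of-the-envelope estimate. The function below is a hypothetical heuristic, not an EVMS formula: it assumes you can estimate how much distinct data on the original will change per hour, and the mapping-table allowance overhead_mb is a figure you must supply, because this guide does not state one.

```python
def estimate_snapshot_size_mb(change_rate_mb_per_hour, lifetime_hours,
                              original_size_mb, overhead_mb):
    """Rough first guess at a snapshot object size, in MB (hypothetical).

    change_rate_mb_per_hour: distinct data expected to change on the original.
    lifetime_hours: how long the snapshot is expected to stay active.
    overhead_mb: assumed allowance for the snapshot mapping tables.
    """
    changed_mb = change_rate_mb_per_hour * lifetime_hours
    # The snapshot never needs to hold more than the whole original,
    # plus room for its mapping tables.
    return min(changed_mb, original_size_mb) + overhead_mb
```

The cap at the original's size mirrors the guidance that a snapshot object as large as the original, plus mapping-table room, is never deactivated.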

After you create a snapshot, activate it by making an EVMS volume from the object. After you create the volume and save the changes, the snapshot is active. The only option you have to specify for activating snapshots is the name to give the EVMS volume. This name can be the same as or different from the name of the snapshot object.

This section shows how to create a snapshot with EVMS:

To create the snapshot using the GUI, follow these steps:

Select Actions->Create->Feature Object to see a list of EVMS feature objects.

Select Snapshot Feature.

Click Next.

Select lvm/Sample Container/Sample Region.

Click Next.

Select /dev/evms/vol from the list in the "Volume to be Snapshotted" field.

Type snap in the "Snapshot Object Name" field.

Click Create.

Alternatively, you can perform some of the steps to create a snapshot with the GUI context sensitive menu:

From the Available Objects tab, right click lvm/Sample Container/Sample Region.

Click Create Feature Object...

Continue creating the snapshot beginning with step 2 of the GUI instructions. You can skip steps 4 and 5 of the GUI instructions.

To create the snapshot using Ncurses, follow these steps:

Select Actions->Create->Feature Object to see a list of EVMS feature objects.

Select Snapshot Feature.

Activate Next.

Select lvm/Sample Container/Sample Region.

Activate Next.

Press spacebar to edit the "Volume to be Snapshotted" field.

Highlight /dev/evms/vol and press spacebar to select.

Activate OK.

Highlight "Snapshot Object Name" and press spacebar to edit.

Type snap at the "::" prompt. Press Enter.

Activate Create.

Alternatively, you can perform some of the steps to create a snapshot with the context sensitive menu:

From the Available Objects view, press Enter on lvm/Sample Container/Sample Region.

Activate the Create Feature Object menu item.

Continue creating the snapshot beginning with step 6 of the Ncurses instructions.